Why Headcount Won't Fix Your Documentation Debt

Three products. Three codebases. Three teams that each built their own way of doing things. And somewhere in the middle of all that, a documentation problem that grows faster than any hiring plan can fix.

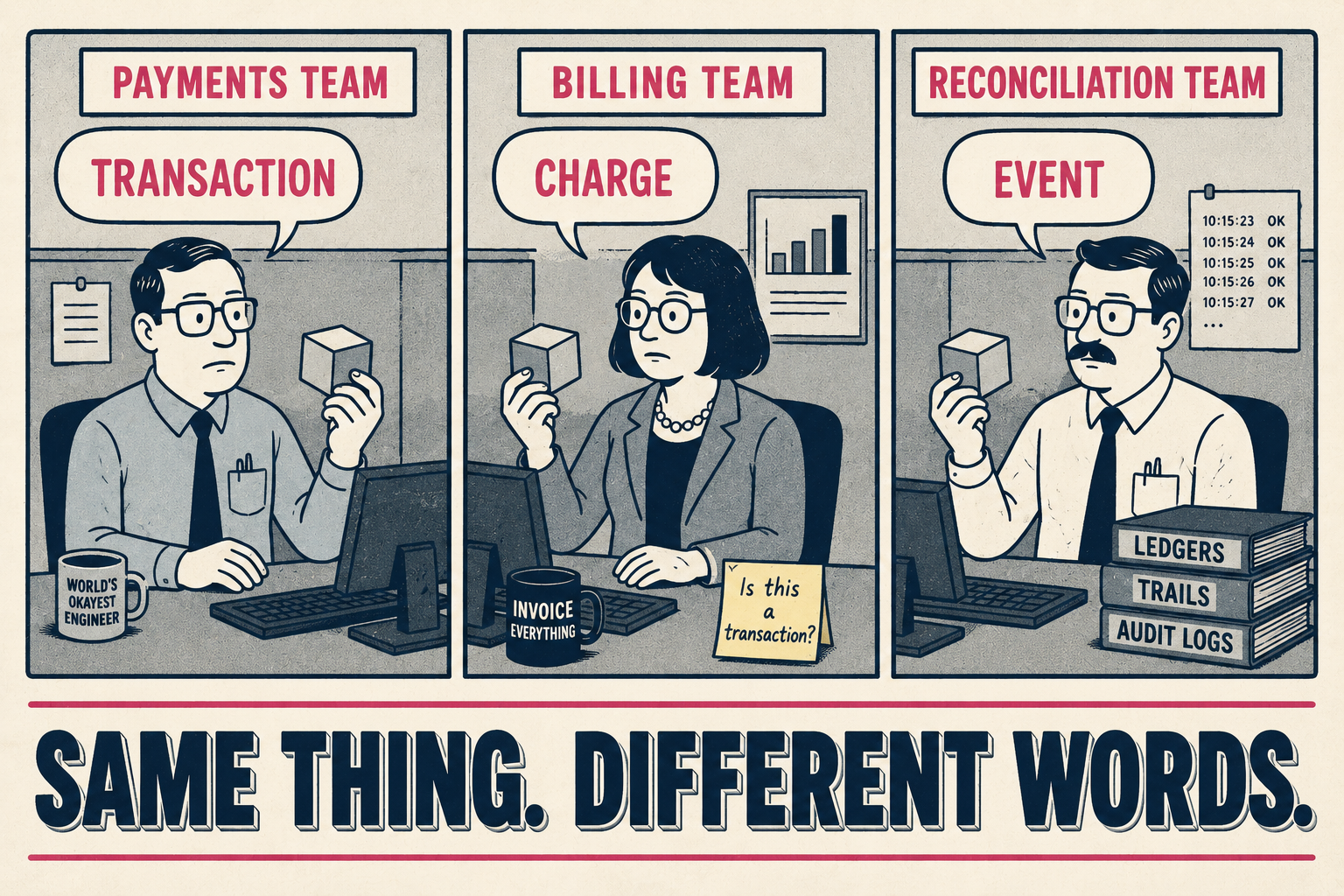

This is the situation most engineering leaders in multi-product companies eventually arrive at. Not because anyone made a bad decision, but because products built independently accumulate independent habits. The payment team calls it a "transaction." The billing team calls it a "charge." The reconciliation team calls it an "event." All three are talking about the same thing. None of the documentation says so.

The instinct is to hire more technical writers. The instinct is wrong. More writers don't fix a structural problem — they just distribute the confusion more evenly. The real fix is a documentation architecture that generates artifacts from the engineering activity already happening, governed by a small team with the right tools and authority to enforce consistency across all product lines.

That's the framework. Here's how to build it.

Phase 1: Audit What Exists Before You Standardize Anything

The first mistake most teams make is jumping to tooling before they understand what they have. A non-standardized documentation landscape typically includes multiple structure logics across product lines, inconsistent naming conventions and terminology, redundant modules written independently by different teams, and content stored across SharePoint, drives, legacy systems, and local folders — with manual copy-paste processes to keep any of it aligned. That's not a description of a broken system. That's a description of how most multi-product companies actually operate.

Start with an inventory. Map every documentation asset — API references, quickstarts, guides, changelogs, internal wikis — and categorize them by type, product, and owner. The Diataxis framework is useful here: it separates tutorials, how-to guides, reference material, and explanations into distinct categories, which makes it easier to spot where you have duplication and where you have gaps.

Then do something most teams skip: read the documentation as a new developer would. Walk through the quickstarts step by step. Follow the API reference against a real integration. You'll find the inconsistencies immediately. The payment team uses one authentication pattern; the billing team uses another. The reconciliation service has no quickstart at all. The shared authentication library is documented three times, differently, in three separate places.

The decisions you need to make at this stage are:

- What terminology conflicts need to be resolved, and who has authority to resolve them?

- Which content is genuinely product-specific versus which content is duplicated shared infrastructure?

- Where is the single source of truth for cross-product workflows, and if it doesn't exist, where should it live?

The common mistake here is treating the audit as a writing project. It isn't. It's an information architecture project. The output isn't better prose — it's a map of what exists, what overlaps, and what's missing. That map is what you use to make the next set of decisions.

One more thing worth naming: the cost of not doing this. Employees spend an average of 3.6 hours every day searching for information at work, a number that has increased by a full hour over just one year. For an engineering organization, that's not just a productivity problem — it's a compounding one. Every new hire who can't find the right documentation learns from whoever sits nearest to them, which means the tribal knowledge problem gets worse with every headcount addition, not better.

Phase 2: Consolidate Workflows Without Forcing False Uniformity

The second mistake is assuming that consolidation means making everything the same. It doesn't. A payment product and a developer tooling product have genuinely different documentation needs. The goal isn't uniformity — it's interoperability. Shared standards where they matter, product-specific flexibility where it doesn't.

The practical version of this looks like: one style guide, one terminology glossary, one shared component library for things like authentication flows and error codes, and then product-specific workspaces that inherit from those shared foundations. A central knowledge base lets teams reuse common content while maintaining tone, structure, and accuracy through shared templates — and dedicated workspaces enable product-specific customization without creating a new silo.

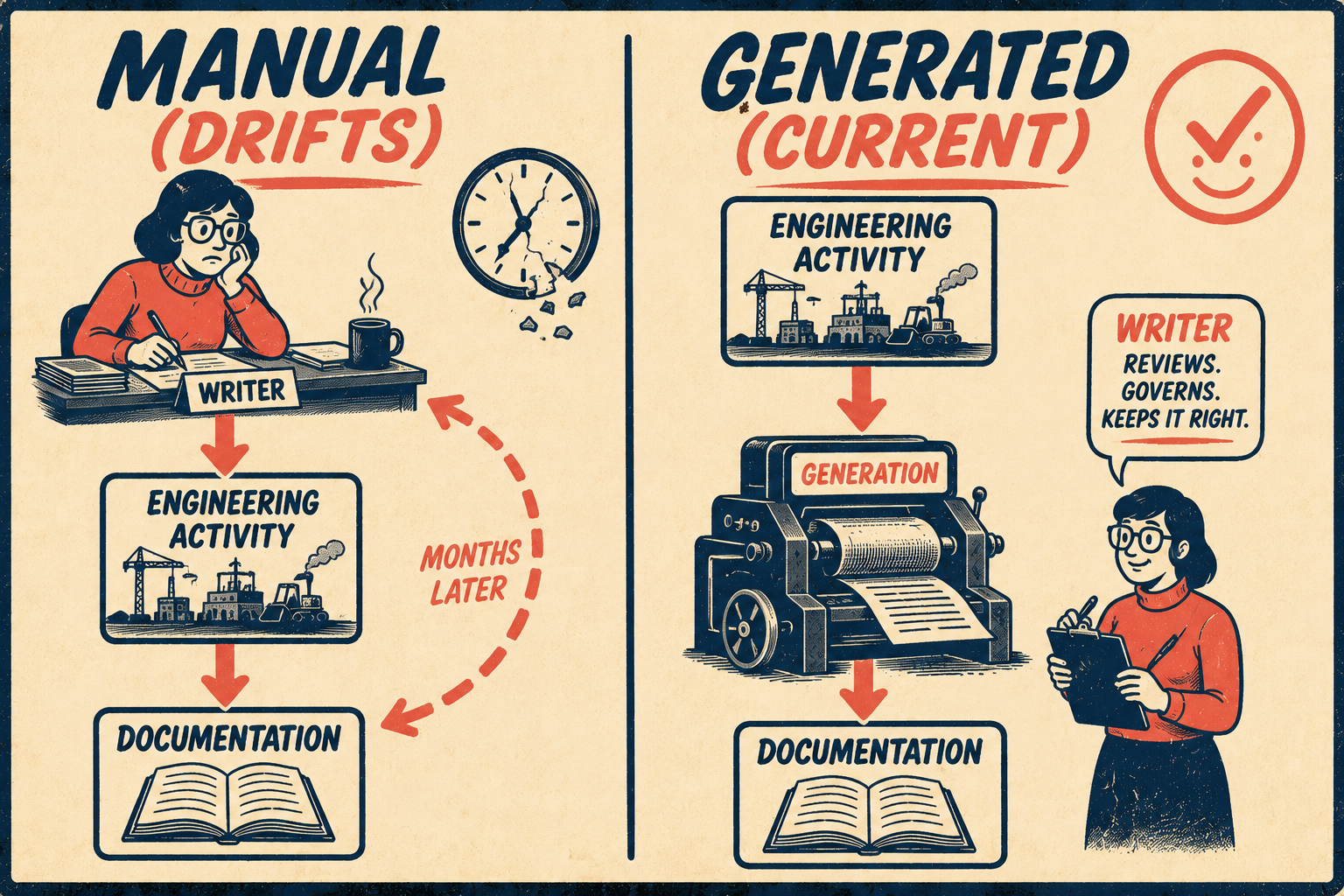

The tooling decision matters here, but it's secondary to the process decision. The question isn't which tool to use — it's whether your documentation lives where your engineering work happens. Treating documentation like code means placing it under source control, assigning clear ownership, requiring reviews for changes, and tracking issues as bugs. When documentation lives in the same workflow as the code it describes, it gets updated when the code changes. When it lives somewhere else, it drifts.

This is the core of the documentation drift problem. Drift between design, implementation, and documentation is one of the most persistent challenges in software engineering — and it compounds in multi-product environments because there are more surfaces to drift across and fewer people watching all of them. The standard response — ask writers to chase engineers for updates — doesn't scale. It produces documentation that's always slightly behind, always slightly wrong, and increasingly distrusted.

The better approach is to generate documentation directly from the engineering activity that's already happening. Release tags, API definitions, commit patterns — these are the ground truth. GitHub's automatically generated release notes, for example, pull directly from merged pull requests and contributor activity, providing an automated alternative to manually writing release notes after each release. Similarly, Stripe decoupled code and content by building Markdoc, an authoring format that allows writers to use interactive components and page logic without mixing code into the content, ensuring that API references and product docs remain maintainable at scale. The same principle applies to API references generated from OpenAPI specs, changelogs generated from commit conventions, and architecture notes generated from code structure.

When documentation is generated from engineering activity rather than written after the fact, it stays current by construction. The writer's job shifts from producing content to governing it — which is a much more scalable role.

This is where Doc Holiday fits naturally into the workflow. It generates the core documentation artifacts — release notes, API references, changelogs — directly from those engineering signals, then provides the infrastructure for a lean team to validate, edit, and manage output across all product lines from a single system. The generation happens automatically; the governance happens intentionally.

Phase 3: Validate at Scale Without Reviewing Every Line

Once you're generating documentation at scale, the bottleneck shifts. The problem is no longer production — it's quality assurance. And this is where most teams make the third mistake: treating validation as a writing problem when it's actually a content operations problem.

A writing problem gets solved by hiring more writers. A content operations problem gets solved by building better infrastructure for the writers you have.

Engineering turnover in tech runs 15-20% annually. For a 20-person team, that's 3-4 people leaving every year, each one taking undocumented context with them. The standard response is to hire replacements and wait for them to ramp up. But the real cost isn't onboarding time — it's the decisions that get re-litigated because nobody wrote down why they were made the first time. Documentation that's generated from engineering activity captures the what automatically; the governance layer is what ensures the why doesn't get lost.

The shift that makes this work is moving your most experienced technical writer upstream. Technical writing is shifting from producing documents to designing knowledge systems — writers are increasingly responsible for structure, reuse, metadata, workflows, and lifecycle thinking, not just polished pages. One senior person with the right infrastructure can set standards, audit generated outputs, flag edge cases, and govern the system across all product lines. That's a fundamentally different job description than "write the release notes for three products."

The practical version of this looks like: the senior writer defines the style guide and terminology glossary that govern all generated output. Automated linters enforce those standards at the point of generation. The writer reviews flagged exceptions — content that falls outside the expected patterns — rather than reviewing everything. Over time, the exceptions become training data for better generation, and the review burden decreases rather than growing with the product portfolio.

This is the force multiplier model. One senior technical writer governing a system that generates and validates documentation across three products is more effective than five junior writers manually producing and maintaining documentation for one. The leverage comes from the infrastructure, not the headcount.

The validation problem, properly framed, is this: you need a system that generates accurate content from engineering signals, enforces consistency through automated standards, and surfaces exceptions for human review. That's a content operations challenge. The solution is giving your most experienced person the tools to govern the system — not giving five less experienced people the task of manually keeping up with it.