Your Engineering Team is Shipping Faster Than Your Docs Can Follow. Here's What to Do About It.

It's a Tuesday morning sync. Your customer success lead is going through the support queue out loud. Every third ticket is some version of: "We didn't know this feature existed." Or: "How does this new thing work?" Or, the one that stings a little more: "We thought this was broken."

The feature shipped three weeks ago. The engineer who built it is already two sprints ahead. Nobody wrote the release note. Nobody had time.

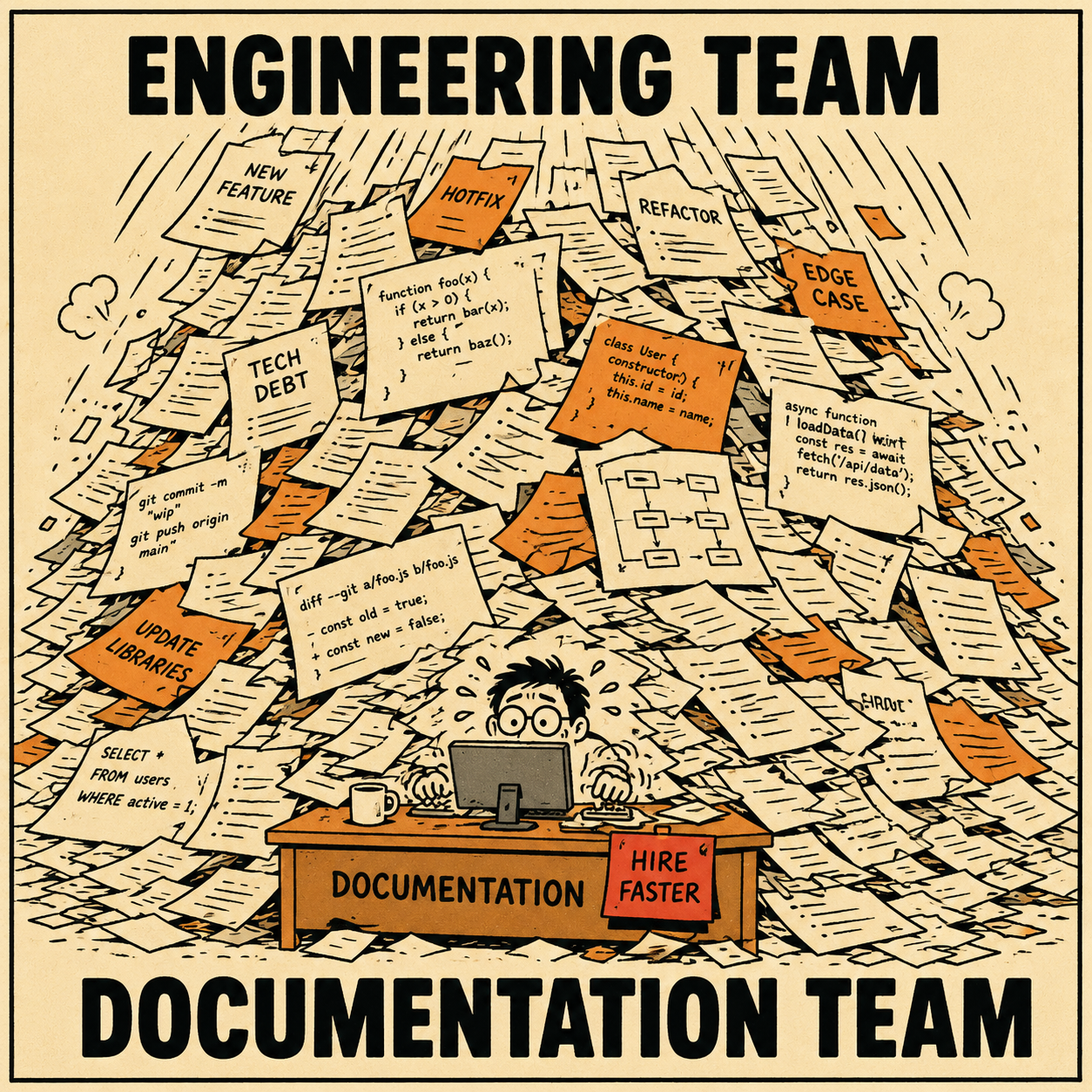

This is the documentation gap at Series A and B, and it's not a writing problem. It's a production problem. Your team is generating code faster than any human process can convert it into customer-facing information. The result is a growing pile of shipped features that customers don't know about, a support queue full of questions that shouldn't exist, and a product team that's become the de facto human changelog for the entire company.

The fix isn't to write faster. It's to change where the writing comes from.

Why Hiring Doesn't Fix This

The instinct is to hire someone. A technical writer, a content manager, a documentation lead. And eventually, that hire is probably right.

But at 40 or 60 or 80 people, you're shipping daily. A single technical writer, even a good one, can clear maybe 200 to 300 pieces of documentation a year. A 50-person engineering team shipping daily can generate more documentation debt in a quarter than that. You're not behind because you're understaffed. You're behind because the production rate of documentation debt is fundamentally higher than the production rate of any human documentation process.

David Nunez, who led documentation at both Stripe and Uber, has watched this cycle repeat at company after company. The engineering team grows faster than the documentation team can keep up. The result is that early engineers get bombarded with questions, because asking them is faster than finding written answers that don't exist (Nunez, 2022). The heroes of the company stop building and start answering.

Hiring into that problem means you're always a quarter behind. You hire a writer, they clear the backlog, the backlog grows back, you hire another writer. The only way out is to change the ratio between how fast documentation debt accumulates and how fast it gets resolved.

Automation changes that ratio.

What the Automation Layer Actually Does

The core idea is straightforward. Your engineering workflows already contain most of the information that goes into a release note. A pull request has a title, a description, a linked ticket, and a diff. A Jira ticket has acceptance criteria, a summary, and a status history. That metadata is not documentation, but it is the raw material for documentation.

What AI does is translate that raw material into a first draft. Not a perfect draft. A starting point.

YugabyteDB built exactly this kind of pipeline, using GPT-4 to process issue summaries and commit messages into user-facing release notes. Their engineers found that keeping context short (around 5,000 characters per issue) produced higher-quality output, and that the hardest part was prompt design, not engineering (Yugabyte, 2024). The system doesn't replace the judgment call about what matters to a user. It handles the translation from technical change to readable sentence, which is the part that takes time.

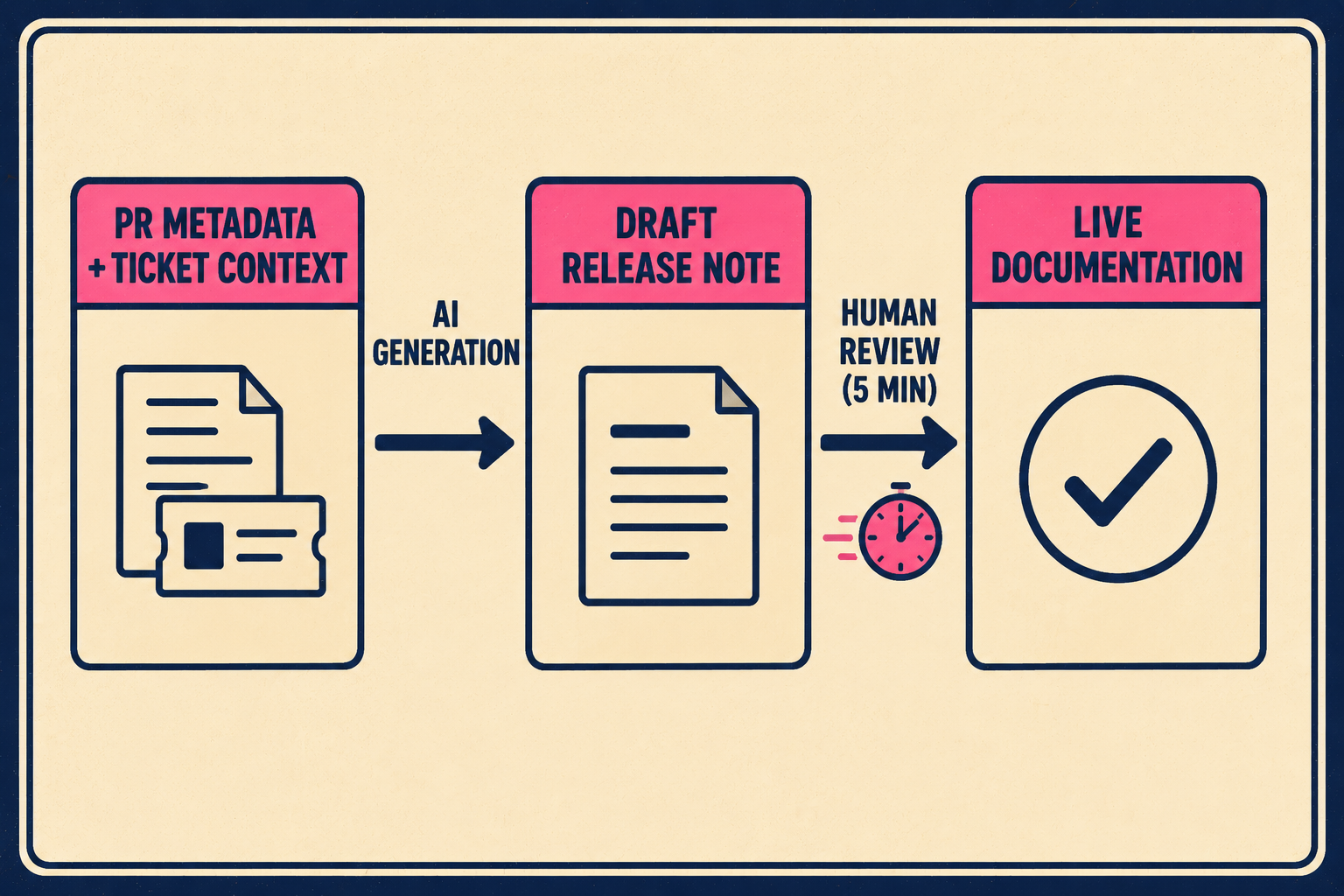

Ascend.io built a similar system using GitHub Actions and OpenAI, triggering on commits and generating structured release notes with features, improvements, and bug fixes separated into sections. Their pipeline creates a pull request in the documentation repository, so a human reviews and approves before anything goes live (Ascend.io, 2025). The human is still in the loop. They're just reviewing a draft instead of writing from scratch.

That distinction matters. A product manager or technical lead reviewing a 150-word draft takes five minutes. Writing that same draft from a ticket and a PR description takes thirty. Multiply that across every release, every sprint, every quarter, and the difference is whether documentation happens at all.

The research on documentation in continuous software development is consistent on this point: the challenge isn't that teams don't value documentation, it's that the cost of producing it in real time is too high relative to the immediate pressure of shipping (Theunissen et al., 2022). Automation lowers that cost to a level where it actually gets done.

Anyway. The question isn't whether this works in principle. It works. The question is what it requires from the team doing it.

What Good Looks Like at This Stage

The workflow that actually functions at a 50-to-100-person company looks like this: the engineer ships a feature. The system pulls the PR metadata and ticket context, generates a draft release note, and routes it to a product manager or technical lead for review. That person spends a few minutes checking for accuracy, adjusting the framing for a customer audience, and approving. The note goes live the same day.

That's it. The engineering team doesn't change their workflow. The review step is light enough that it doesn't become a bottleneck. And the output is consistent, because the generation layer is consistent.

The DORA research from 2022 found that documentation quality doesn't just make teams feel better organized. It amplifies the performance impact of every technical capability a team implements. Teams with above-average documentation quality see a 656% lift in organizational performance from continuous delivery, compared to 63% for teams with below-average documentation quality (DORA, 2022). The documentation is load-bearing infrastructure, not a nice-to-have.

What changes when this works: customers see what shipped. Support gets fewer "what's new" questions. The product team stops being the human changelog. The feedback loop between shipping and customer understanding tightens from weeks to hours.

For the technical writer you eventually hire, this is also a better job. Managing templates, training the system on edge cases, reviewing for architectural consistency, catching the places where the AI got the framing wrong for a particular customer segment. That's a much better use of senior writing talent than grinding through changelogs. Mercari's engineering team found the same thing when they applied AI to their documentation backlog: the bottleneck shifted from production to governance, and governance is where human judgment actually matters (Mercari, 2025).

The GitHub Conventional Commits specification is worth knowing here, too. When commit messages follow a structured format, the signal available to the generation layer is much richer. feat:, fix:, chore: prefixes aren't just style conventions; they're metadata that makes automated categorization more accurate (Conventional Commits, 2020). GitHub's own automatically generated release notes use PR labels and merge history to do the same thing (GitHub Docs, 2024). The more structured the input, the better the output.

None of this requires a full documentation org. It requires a system that generates from the engineering workflow, and a lean team that validates and refines the output. Teams at this stage can build exactly that workflow using Doc Holiday, which pulls release notes, changelogs, and API references directly from engineering activity and gives the reviewers a structured interface to catch what needs fixing before it goes live, without waiting to staff a documentation org first.